Tour of Temporal: Welcome to the Workflow

I was exposed to workflows early in my career. At first this was in enterprise environments, where advanced GUIs were used to model highly complex business workflows whose execution would invariably lead to hours of debugging through mountains of log files, via a remote desktop connection to the server running the proprietary workflow execution engine. Later it was in research projects, which would invariably feature some type of home-grown workflow engine (with a shiny GUI) to solve a part of the project. These interactions left me with a rather poor aftertaste of the entire concept of workflows, which can be summed up in two words: slow and complicated.

So I was rather intrigued when I read that Sergey Bykov had left Microsoft (where he was leading Microsoft Orleans, a framework for “building robust, scalable distributed applications”, much like Akka) to join Temporal, especially when I read that Temporal was all about workflows, which in my mind were still these clunky, slow attempts at abstracting code behind a GUI. As it turns out, Temporal has no GUI (at least not one for building workflows) and workflow execution isn’t slow (though it can be long, if that is what the workflow requires).

Why workflows?

Conceptually, workflows operate at a slightly higher level of abstraction than actors. As with any abstraction, this boils down to a choice between two pills:

When you take the red pill (actor toolkits), you get to learn kung-fu and fight the agents of distributed systems. This can be a marvelous and infuriating experience at the same time, which is why people who spend a lot of time with distributed systems tend to question their sanity much more often than average. Sometimes operating at this level of abstraction is necessary, which is to say defining your own protocol and carefully orchestrating how messages are routed between nodes, which trade-offs are made during failure recovery, etc. In my experience this is often the case with low-latency systems where each millisecond counts.

When you take the blue pill (workflows), you get to keep your sanity, focus on application code and entirely outsource the failure handling and recovery part. This doesn’t mean you can entirely ignore the execution model: as with any abstraction, having a sound understanding of the tradeoffs is key to making good use of it. However, many of the underlying details are taken care of by the orchestration engine, which I believe can result in tremendous productivity gains when used in the right context.

This article aims at introducing Temporal and will make comparisons with the actor model (and actor toolkits) along the way.

Temporal workflows

Temporal is a distributed and scalable workflow orchestration engine capable of running millions of workflows. Workflows can hold state and describe which activities (workflow tasks) should be carried out. Activities are distributed using task queues and executed on worker nodes organized in a cluster. Failure handling is taken out of the hands of application developers and handled by the engine.

If you’re familiar with the actor model you may notice a few similarities: entities capable of executing logic and holding state, distributed on multiple nodes, with failure handling being a first-class concern. When looking closer, a few key differences start to emerge:

- workflows do not carry out any side-effecting operations such as calling third-party services, writing to a database, uploading a file, etc. These are taken care of by activities. This is necessary for workflow execution to be deterministic, which enables them to be consistently “resumable” (more on this later)

- the layer of abstraction of workflows / activities eliminates the need for application developers to define a custom message protocol. That isn’t to say that there is no protocol, it just means that Temporal has defined it. What is left for application developers to do is to define workflow methods (with their parameters) which are then transported by Temporal (as we’ll see, parameter values need to be serializable). This is a very elegant solution: just like actors provide the illusion of single-threaded, sequential execution, workflows provide the illusion of persistent method calls.

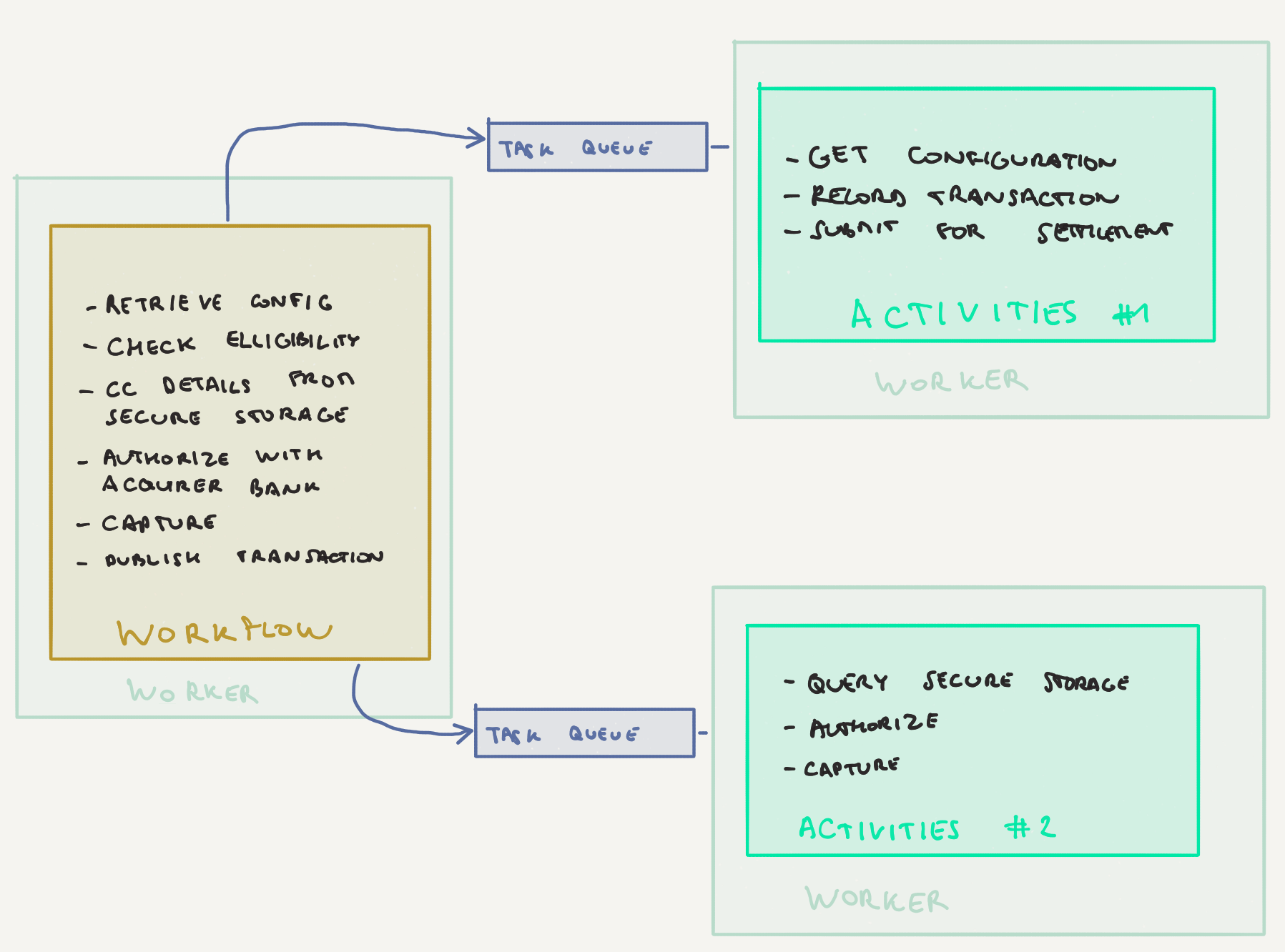

Let’s look at some code. The example in this article will be the one of a payment services provider, just like the one in Tour of Akka Typed.

A workflow definition is just a method with parameters:

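
A minimal sketch of such a definition, assuming the Temporal Java SDK is on the classpath. Only the two annotations come from the SDK; the interface name `PaymentHandlingWorkflow`, the method `handlePayment`, its parameters and the `PaymentResult` type are hypothetical names chosen for illustration.

```kotlin
import io.temporal.workflow.WorkflowInterface
import io.temporal.workflow.WorkflowMethod

// Hypothetical result type; it has to be serializable by the configured DataConverter.
data class PaymentResult(val transactionId: String, val succeeded: Boolean)

// Hypothetical workflow definition: the interface is the contract,
// the implementation is registered with a worker separately.
@WorkflowInterface
interface PaymentHandlingWorkflow {

    // The single entry point of the workflow.
    @WorkflowMethod
    fun handlePayment(
        orderId: String,
        amountInCents: Long,
        merchantId: String,
        userId: String
    ): PaymentResult
}
```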

The @WorkflowMethod annotation is a marker for the entry point of the workflow. There can only be one for each workflow. As we’ll see later there can be other types of methods in a workflow definition used to send events to the workflow or query its state.

Similarly, activities are also defined via an interface:

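
As a rough sketch under the same assumptions (Temporal Java SDK on the classpath), an activity interface could look like this. The method names `retrieveConfiguration` and `dispatchForSettlement` are the ones mentioned in the text; their signatures and the two data classes are guesses made for illustration.

```kotlin
import io.temporal.activity.ActivityInterface

// Hypothetical data types; the real project may model these differently.
data class PaymentConfiguration(val merchantId: String, val userId: String, val paymentMethod: String)
data class Transaction(val id: String, val merchantId: String, val amountInCents: Long)

@ActivityInterface
interface PaymentHandlingActivities {

    // Side-effecting: reads the stored configuration, null if none is found.
    fun retrieveConfiguration(merchantId: String, userId: String): PaymentConfiguration?

    // Side-effecting: hands the transaction over to the settlement process.
    fun dispatchForSettlement(transaction: Transaction)
}
```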

The implementation of the workflow interface is the one calling these activity methods. Activities are typically side-effecting - in this example, retrieveConfiguration will retrieve the payment configuration for the merchant and the user from storage and dispatchForSettlement will pass on a successfully carried out transaction to a downstream settlement service using an event bus such as Kafka.

Temporal automatically retries failed activities. For this process to be smooth, activities should ideally be idempotent, i.e. have same-effect semantics (e.g. uploading a file twice shouldn’t raise an error the second time around), but they don’t have to be. Indeed, in some scenarios carrying out an activity twice isn’t an option, for example when transferring money from one account to another. For such cases, Temporal has built-in support for the Saga pattern, which uses compensation actions to roll back to a healthy state in case of failure.
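
To make the idea of compensation actions concrete, here is a plain-Kotlin sketch of the principle. It deliberately does not use Temporal's own Saga support; `CompensationLog`, `Accounts` and `transfer` are hypothetical names, and the in-memory accounts stand in for real side-effecting activities.

```kotlin
// Illustration of compensation only; this is not Temporal's Saga API.
class CompensationLog {
    private val compensations = mutableListOf<() -> Unit>()

    // Remember how to undo a step that has just succeeded.
    fun register(compensation: () -> Unit) {
        compensations.add(compensation)
    }

    // Undo completed steps, most recent first.
    fun compensate() {
        compensations.asReversed().forEach { it() }
        compensations.clear()
    }
}

// Hypothetical in-memory accounts standing in for an external system.
class Accounts(initial: Map<String, Long>) {
    private val balances = initial.toMutableMap()

    fun balance(account: String): Long = balances.getValue(account)

    fun withdraw(account: String, amount: Long) {
        require(balance(account) >= amount) { "insufficient funds in $account" }
        balances[account] = balance(account) - amount
    }

    fun deposit(account: String, amount: Long) {
        require(account in balances) { "unknown account $account" }
        balances[account] = balance(account) + amount
    }
}

// Returns true if the transfer went through, false if it was rolled back.
fun transfer(accounts: Accounts, from: String, to: String, amount: Long): Boolean {
    val log = CompensationLog()
    return try {
        accounts.withdraw(from, amount)
        log.register { accounts.deposit(from, amount) } // undo for the withdrawal
        accounts.deposit(to, amount)
        true
    } catch (e: IllegalArgumentException) {
        log.compensate()
        false
    }
}
```

A transfer to an unknown account fails on the second step, and the compensation puts the withdrawn amount back, so the sender's balance is unchanged.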

Workflow implementations

Let’s now have a look at the implementation of our workflow definition above. It needs to do 3 things:

- Retrieve the stored configuration for merchant and user

- Carry out the payment given the payment method retrieved in the configuration (credit card payments, SEPA transfer, carrier-pigeon dispatched cheque, etc.)

- Pass the resulting transaction to an external settlement process

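
The three steps above could be sketched as follows, assuming the Temporal Java SDK is on the classpath. `Workflow.newActivityStub`, `Workflow.newChildWorkflowStub` and `ActivityOptions` are SDK calls; all type names, method signatures, the string-based payment method and the 10 second timeout are assumptions made for this illustration, not the article's actual code.

```kotlin
import io.temporal.activity.ActivityInterface
import io.temporal.activity.ActivityOptions
import io.temporal.workflow.Workflow
import io.temporal.workflow.WorkflowInterface
import io.temporal.workflow.WorkflowMethod
import java.time.Duration

// Hypothetical data types.
data class PaymentConfiguration(val paymentMethod: String)
data class Transaction(val id: String, val amountInCents: Long)

@ActivityInterface
interface PaymentHandlingActivities {
    fun retrieveConfiguration(merchantId: String, userId: String): PaymentConfiguration?
    fun dispatchForSettlement(transaction: Transaction)
}

@WorkflowInterface
interface CreditCardProcessingWorkflow {
    @WorkflowMethod
    fun processPayment(orderId: String, amountInCents: Long): Transaction
}

@WorkflowInterface
interface PaymentHandlingWorkflow {
    @WorkflowMethod
    fun handlePayment(orderId: String, amountInCents: Long, merchantId: String, userId: String): String
}

class PaymentHandlingWorkflowImpl : PaymentHandlingWorkflow {

    // Activity calls go through a stub so that Temporal can schedule, record and retry them.
    private val activities: PaymentHandlingActivities = Workflow.newActivityStub(
        PaymentHandlingActivities::class.java,
        ActivityOptions.newBuilder()
            .setStartToCloseTimeout(Duration.ofSeconds(10)) // assumed timeout
            .build()
    )

    override fun handlePayment(orderId: String, amountInCents: Long, merchantId: String, userId: String): String {
        // 1. Retrieve the stored configuration for merchant and user.
        val configuration = activities.retrieveConfiguration(merchantId, userId)
            ?: return "FAILED: no configuration"

        // 2. Carry out the payment; credit cards are delegated to a child workflow.
        val transaction = when (configuration.paymentMethod) {
            "CREDIT_CARD" -> Workflow
                .newChildWorkflowStub(CreditCardProcessingWorkflow::class.java)
                .processPayment(orderId, amountInCents)
            else -> return "FAILED: unsupported payment method"
        }

        // 3. Pass the resulting transaction to the external settlement process.
        activities.dispatchForSettlement(transaction)
        return "SUCCEEDED: ${transaction.id}"
    }
}
```

Note that the implementation class can only be instantiated by a Temporal worker (or test environment), since the activity stub is created in the context of a running workflow.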

As you can see, at first sight there’s nothing all too out of the ordinary in the implementation. The only two elements that are specific to the Temporal SDK here are the use of the PaymentHandlingActivities to carry out “dangerous”, side-effecting operations and the use of a child workflow for credit card transactions (we’ll talk more about child workflows in a bit). Other than that it just looks like plain code.

But is it?

Workflows are durable!

Workflows (and activities) can crash during their execution, be it because of a programming error, an unavailable external dependency (database, third-party system) or a crash of the worker node. Failure is just a fact of life, so embracing it is a wise thing to do. Temporal’s approach here is to make the entire workflow method durable. If the execution is interrupted at any point in time / at any line in the code, Temporal will retry the execution by resuming where it left off previously. Note that this is also the raison d’être of activities which encapsulate the non-deterministic part of the execution (and that is why I mentioned earlier that ideally these activities should be idempotent).

To continue the previous comparison with actors, an equivalent approach in that model would be the one of persistent actors that are able to recover their state after a crash or after having been restarted. The difference, once again, is what this entails for application developers.

With the actor-level abstraction, developers need to define the protocol i.e. the sequence of commands and events that enable the recreation of the state by application of individual events. For example, in order to make sure that the transaction was dispatched for settlement in the workflow above, we’d define a command DispatchForSettlement and its equivalent event DispatchedForSettlement. If the actor were to crash prior to dispatching the transaction it could then rely on the absence of a flag set by the handling of DispatchedForSettlement to decide to execute this action again.
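
The following plain-Kotlin sketch illustrates what this means for the developer. It is not tied to any actor toolkit: the event `DispatchedForSettlement` is taken from the text, while `PaymentProcessed`, `PaymentState`, `recover` and `handleDispatchForSettlement` are hypothetical names for this illustration.

```kotlin
// Events that are persisted to a journal and replayed on recovery.
sealed class PaymentEvent
data class PaymentProcessed(val transactionId: String) : PaymentEvent()
data class DispatchedForSettlement(val transactionId: String) : PaymentEvent()

// State that is rebuilt purely from the journal.
data class PaymentState(val transactionId: String? = null, val dispatched: Boolean = false) {
    fun updated(event: PaymentEvent): PaymentState = when (event) {
        is PaymentProcessed -> copy(transactionId = event.transactionId)
        is DispatchedForSettlement -> copy(dispatched = true)
    }
}

// Recovery after a crash or restart: apply every persisted event in order.
fun recover(journal: List<PaymentEvent>): PaymentState =
    journal.fold(PaymentState()) { state, event -> state.updated(event) }

// Handling of the DispatchForSettlement command: returns the events to persist.
// Thanks to the `dispatched` flag, the dispatch is not repeated after recovery.
fun handleDispatchForSettlement(state: PaymentState): List<PaymentEvent> {
    val id = state.transactionId ?: return emptyList()
    return if (state.dispatched) emptyList() else listOf(DispatchedForSettlement(id))
}
```

All of this (events, state transitions, the flag check) is protocol that the application developer has to design and maintain.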

With a workflow-level abstraction (and especially the design of workflows in Temporal), the entire implementation method becomes durable. Temporal creates an internal representation of the workflow execution (the workflow steps, if you will) and captures the state and state transitions. In case of a crash, it knows exactly where it left off (i.e. which activities have been executed) and is capable of resuming from there, retrying failed activities, etc., without the application code having to do anything special for this to happen. Failure handling is completely outsourced to Temporal.
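
The principle of resuming by replay can be illustrated with a toy model in plain Kotlin. This is a simplification and not Temporal's actual implementation: `ReplayContext`, `HistoryEvent` and `paymentWorkflow` are hypothetical, and the "activities" are lambdas that record their side effects in a list.

```kotlin
// One recorded, completed activity.
data class HistoryEvent(val activity: String, val result: String)

// Toy replay engine: results of completed activities are read back from the
// history instead of executing the activity a second time.
class ReplayContext(private val history: MutableList<HistoryEvent>) {
    private var position = 0

    fun activity(name: String, run: () -> String): String {
        val index = position++
        if (index < history.size) {
            val recorded = history[index]
            // Replay only works if the workflow code takes the same path again.
            check(recorded.activity == name) {
                "non-deterministic workflow: expected ${recorded.activity}, got $name"
            }
            return recorded.result
        }
        val result = run()
        history.add(HistoryEvent(name, result))
        return result
    }
}

// Workflow code is re-run from the top on every attempt.
fun paymentWorkflow(ctx: ReplayContext, sideEffects: MutableList<String>, crashBeforeSettlement: Boolean): String {
    val config = ctx.activity("retrieveConfiguration") {
        sideEffects.add("retrieveConfiguration"); "CREDIT_CARD"
    }
    val transaction = ctx.activity("processPayment") {
        sideEffects.add("processPayment"); "tx-1 via $config"
    }
    if (crashBeforeSettlement) throw IllegalStateException("worker crashed")
    return ctx.activity("dispatchForSettlement") {
        sideEffects.add("dispatchForSettlement"); "settled $transaction"
    }
}
```

Running the workflow once with a simulated crash and then again against the same history executes each activity exactly once overall: the second run replays the first two results and only performs the settlement dispatch. This also shows why the workflow code itself has to be deterministic.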

Of course, there’s no free lunch, so there are a few things we need to do to ensure that workflow implementations run smoothly:

- don’t use global mutable state

- don’t block a workflow using Thread.sleep

- don’t use threads or other forms of asynchronous code directly in workflow implementations (the Temporal SDK exposes methods to wrap / perform asynchronous operations safely within a workflow for this)

- don’t call non-deterministic I/O operations directly inside of the workflow implementation (use activities for that)

- method parameters and any state used in the workflow need to be serializable, as they’ll be sent over the wire (in the example above, the retrieval of the configuration could be executed on one worker node whilst the credit card processing in the child workflow could run on an entirely different set of worker nodes). The serialization method you use has a direct impact on performance.

If you have been using actor toolkits such as Akka, Vert.X etc. in the past, these constraints may sound familiar to you. I’ve covered many gotchas of this type in the Akka anti-patterns series, which also shows some gotchas with classic Akka actors (most of these have been addressed in Akka Typed):

- using global mutable state

- blocking inside an actor

- wrong use of asynchronous constructs when writing actors

- using the wrong type of serialization mechanism

Temporal workflows, just like Akka actors, provide the illusion of a care-free execution environment, and for the illusion to work, a few rules need to be followed.

Setting up and running workflows and activities

Let’s now have a quick look at how to run our workflow. Temporal is a workflow orchestration engine - it will tell our code when to run. For this to work, it is necessary to register workflow implementations and the activity implementations this workflow needs in order to run.

First off, we need a client to communicate with Temporal. In this example we will use the docker-compose based setup.

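
A sketch of what such a client setup might look like, assuming a recent Temporal Java SDK and the `jackson-module-kotlin` library on the classpath. The helper names `kotlinDataConverter` and `newClient` are hypothetical, and the exact converter configuration used in the example project may differ.

```kotlin
import com.fasterxml.jackson.module.kotlin.jacksonObjectMapper
import io.temporal.client.WorkflowClient
import io.temporal.client.WorkflowClientOptions
import io.temporal.common.converter.ByteArrayPayloadConverter
import io.temporal.common.converter.DataConverter
import io.temporal.common.converter.DefaultDataConverter
import io.temporal.common.converter.JacksonJsonPayloadConverter
import io.temporal.common.converter.NullPayloadConverter
import io.temporal.common.converter.ProtobufJsonPayloadConverter
import io.temporal.serviceclient.WorkflowServiceStubs

// Same converter chain as the SDK default, but with a Kotlin-aware Jackson
// ObjectMapper so that data classes can be (de)serialized.
fun kotlinDataConverter(): DataConverter = DefaultDataConverter(
    NullPayloadConverter(),
    ByteArrayPayloadConverter(),
    ProtobufJsonPayloadConverter(),
    JacksonJsonPayloadConverter(jacksonObjectMapper())
)

fun newClient(): WorkflowClient {
    // gRPC stubs for a Temporal server on the default local address.
    // Older SDK releases used WorkflowServiceStubs.newInstance() for this.
    val service = WorkflowServiceStubs.newLocalServiceStubs()
    val options = WorkflowClientOptions.newBuilder()
        .setDataConverter(kotlinDataConverter())
        .build()
    return WorkflowClient.newInstance(service, options)
}
```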

Temporal uses gRPC for communication internally, and in order to communicate with the local docker instance of the Temporal server, the WorkflowServiceStubs are required. When creating a new client we can also configure options; in our case, we’re configuring it to use a custom DataConverter, as the example is written in Kotlin and the Jackson-based serialization needs to be configured to work with it.

Now that we have a client to communicate with the Temporal server, it is time to tell it about the workflows we want to run.

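
As a hedged sketch (Temporal Java SDK assumed on the classpath), registering implementations with a worker could look like the following. To keep it short, it registers only one trivial workflow and one activity implementation; the queue name `"payments"`, the simplified interfaces and `startWorker` are assumptions made for this illustration.

```kotlin
import io.temporal.activity.ActivityInterface
import io.temporal.client.WorkflowClient
import io.temporal.serviceclient.WorkflowServiceStubs
import io.temporal.worker.WorkerFactory
import io.temporal.workflow.WorkflowInterface
import io.temporal.workflow.WorkflowMethod

const val TASK_QUEUE = "payments" // hypothetical queue name

@ActivityInterface
interface PaymentHandlingActivities {
    fun dispatchForSettlement(transactionId: String)
}

class PaymentHandlingActivitiesImpl : PaymentHandlingActivities {
    override fun dispatchForSettlement(transactionId: String) {
        println("dispatching $transactionId for settlement")
    }
}

@WorkflowInterface
interface PaymentHandlingWorkflow {
    @WorkflowMethod
    fun handlePayment(orderId: String): String
}

// Deliberately trivial; a real implementation would call activities.
class PaymentHandlingWorkflowImpl : PaymentHandlingWorkflow {
    override fun handlePayment(orderId: String): String = "handled $orderId"
}

fun startWorker(): WorkerFactory {
    val service = WorkflowServiceStubs.newLocalServiceStubs()
    val client = WorkflowClient.newInstance(service)
    val factory = WorkerFactory.newInstance(client)

    // One worker polling a single task queue for both workflow and activity tasks.
    val worker = factory.newWorker(TASK_QUEUE)
    // Workflows are registered by type: Temporal creates an instance per execution.
    worker.registerWorkflowImplementationTypes(PaymentHandlingWorkflowImpl::class.java)
    // Activities are registered as instances, which are shared across invocations.
    worker.registerActivitiesImplementations(PaymentHandlingActivitiesImpl())

    factory.start() // starts polling; requires a reachable Temporal server
    return factory
}
```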

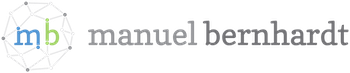

Let’s see what we have done here. For our example, to keep things simple, we’ll just have one worker to run everything: workflows and activities. We first need to create a WorkerFactory that allows us to create new workers. Then, we create one worker to listen to the single TASK_QUEUE and register both our workflow implementations and both our activity implementations with it.

In a real deployment, we would’ve taken another approach. For example, we could’ve chosen to use one worker to run the workflows and separate workers for the activities. Or depending on the load on the system, we’d have one worker running the main workflow, one or several workers for the PaymentHandlingActivities and one dedicated worker running both the specialized CreditCardProcessingWorkflow and its activities.

The configuration of queues and workers is Temporal’s way to allow for scaling and resiliency. For example, if only one worker is capable of running a set of activities, then in case of crash, all workflows requiring those activities will be stuck until the worker is back up.

Finally, it is time to run our workflow:

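
Starting a workflow from client code might look roughly like this, again assuming the Temporal Java SDK. The workflow interface is a simplified, hypothetical one; the queue name, the `payment-<orderId>` workflow id scheme and the helper functions are assumptions, not taken from the example project.

```kotlin
import io.temporal.client.WorkflowClient
import io.temporal.client.WorkflowOptions
import io.temporal.serviceclient.WorkflowServiceStubs
import io.temporal.workflow.WorkflowInterface
import io.temporal.workflow.WorkflowMethod

const val TASK_QUEUE = "payments" // must match the queue the worker listens on

@WorkflowInterface
interface PaymentHandlingWorkflow {
    @WorkflowMethod
    fun handlePayment(orderId: String): String
}

// A business-level workflow id makes executions easy to find in the web UI.
fun workflowOptionsFor(orderId: String): WorkflowOptions =
    WorkflowOptions.newBuilder()
        .setTaskQueue(TASK_QUEUE)
        .setWorkflowId("payment-$orderId")
        .build()

fun runPayment(orderId: String): String {
    val service = WorkflowServiceStubs.newLocalServiceStubs()
    val client = WorkflowClient.newInstance(service)

    // The stub looks like a plain object, but the call is routed through Temporal.
    val workflow = client.newWorkflowStub(PaymentHandlingWorkflow::class.java, workflowOptionsFor(orderId))

    // Synchronous invocation: blocks until the workflow execution completes.
    return workflow.handlePayment(orderId)
}
```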

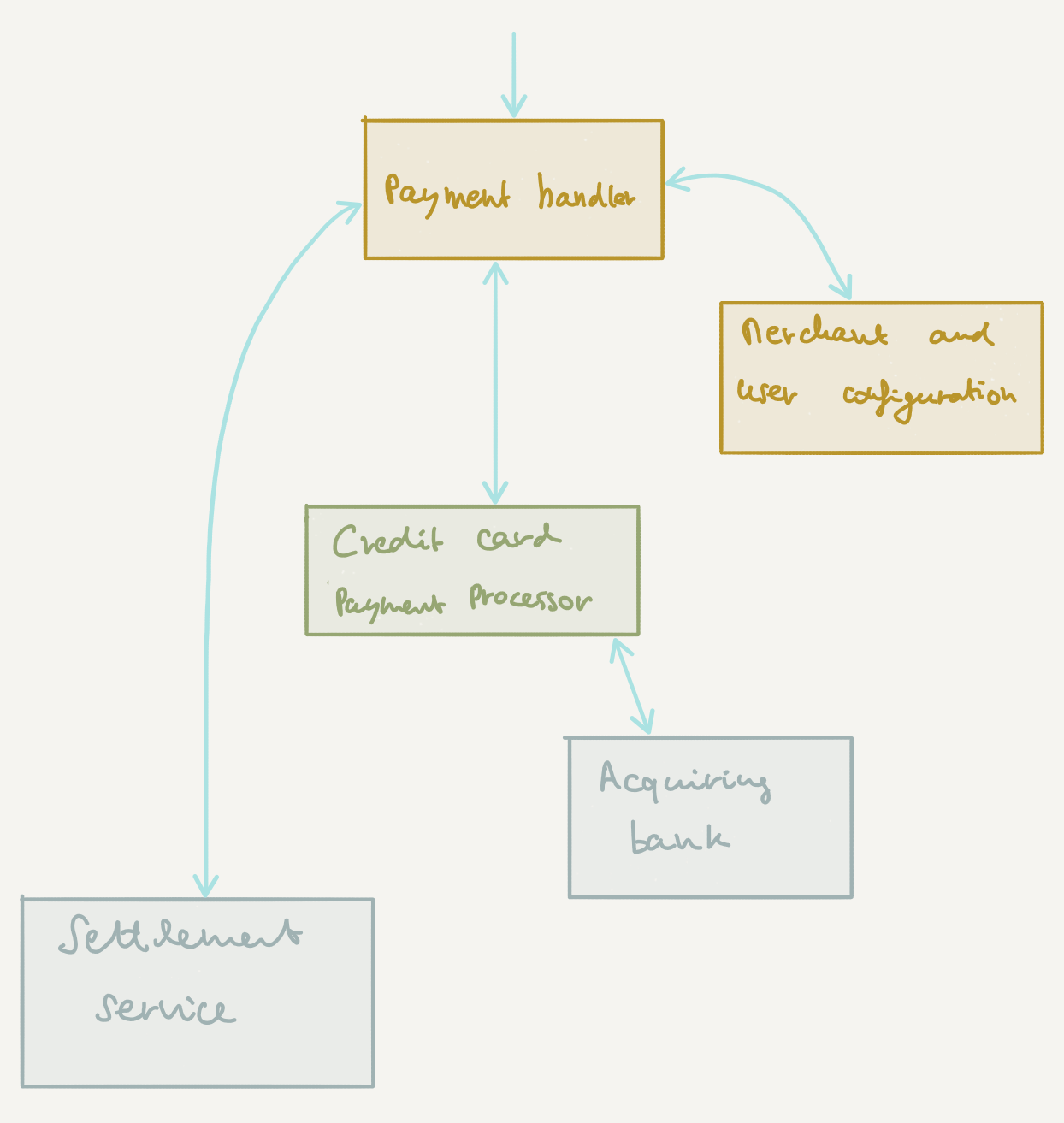

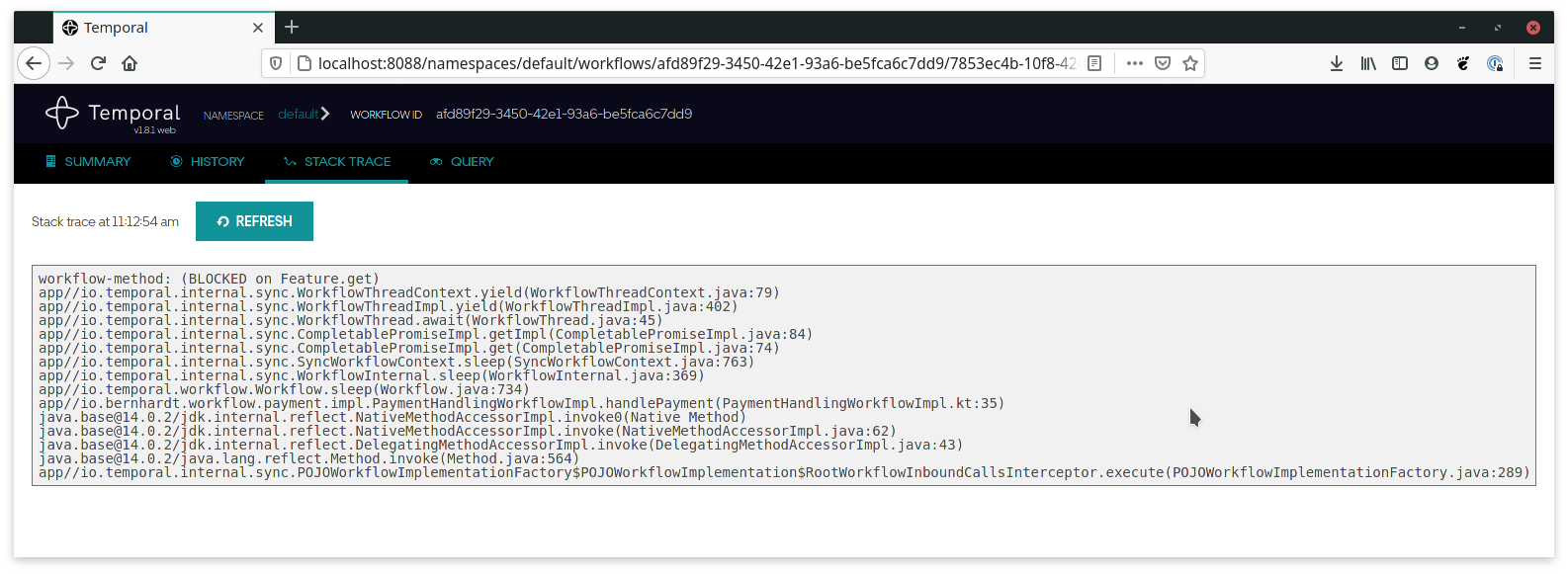

Temporal has a web UI that displays the execution of workflows. When running the docker-compose example, it is accessible on http://localhost:8088

This is quite useful to have around, not only during development but also when running those workflows, to figure out exactly what happened during an execution. It also gives access to a virtual stack trace of the workflow execution, which is quite a developer-friendly way of showing where a workflow is currently stuck. For example, if we were to pause the execution a bit with Workflow.sleep prior to dispatching for settlement, we could observe the following trace:

This is particularly useful when investigating issues with a workflow execution, as it shows exactly at what point in user code the execution is stuck.

Summary

This is it for the first part of this series! You can find the source code for this example project on GitHub.

In the next part, we will be looking into one of my favorite topics: performance. In the following parts we will also explore other key concepts such as event handling via workflow signals, queries and scheduling as well as workflow versioning. Stay tuned!